For Teachers By Teachers

Stay current with the best K-12 literacy practices, curated in a way you can access and use now.

Join the Choice Literacy community today!

- Access over 3,000 Choice Literacy articles

- Access over 900 Choice Literacy videos

- Access courses and live events

Membership

BE CONFIDENT

Know you are using vetted literacy workshop practices.

ENGAGE STUDENTS

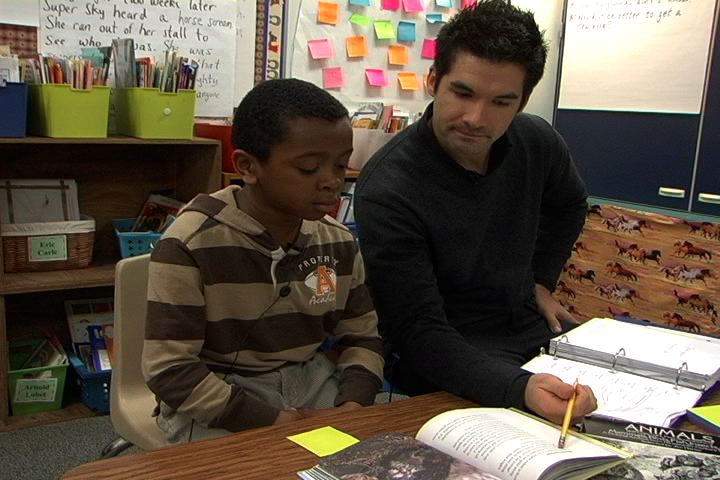

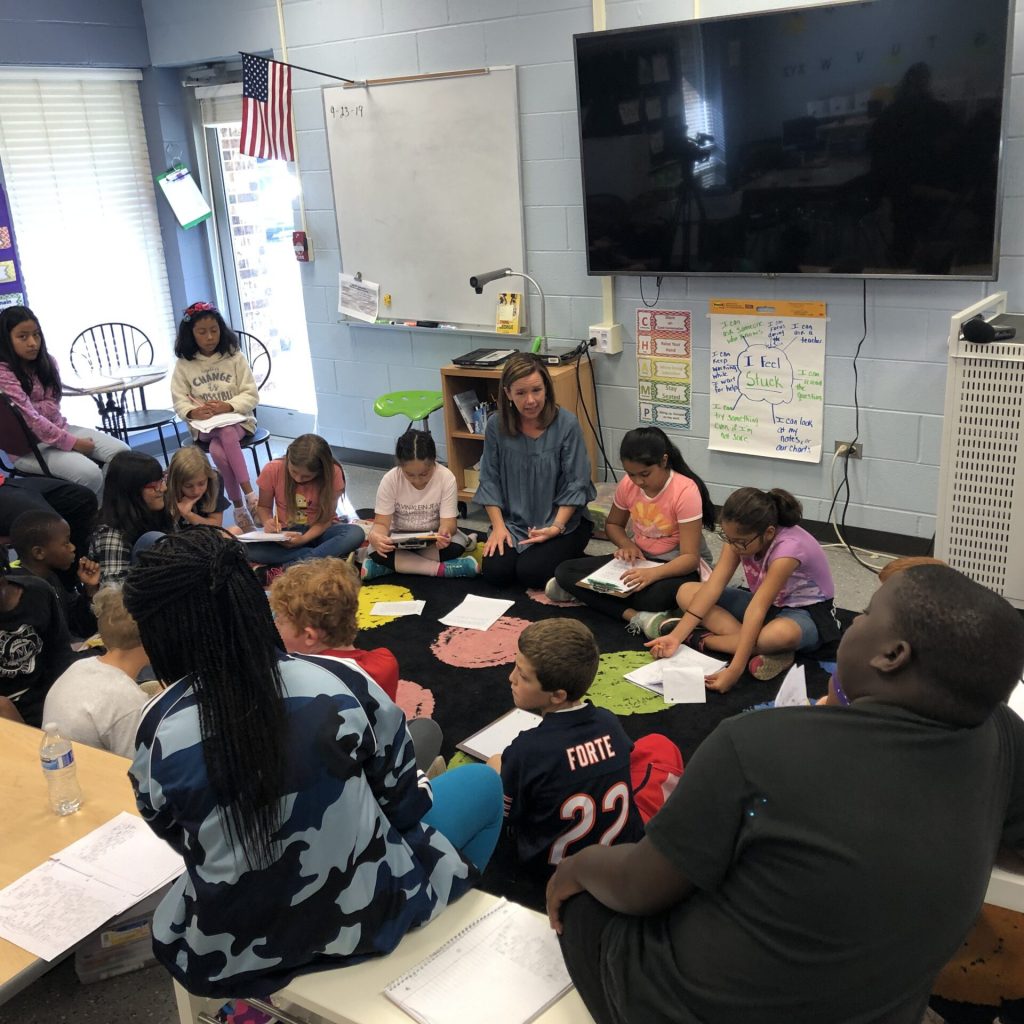

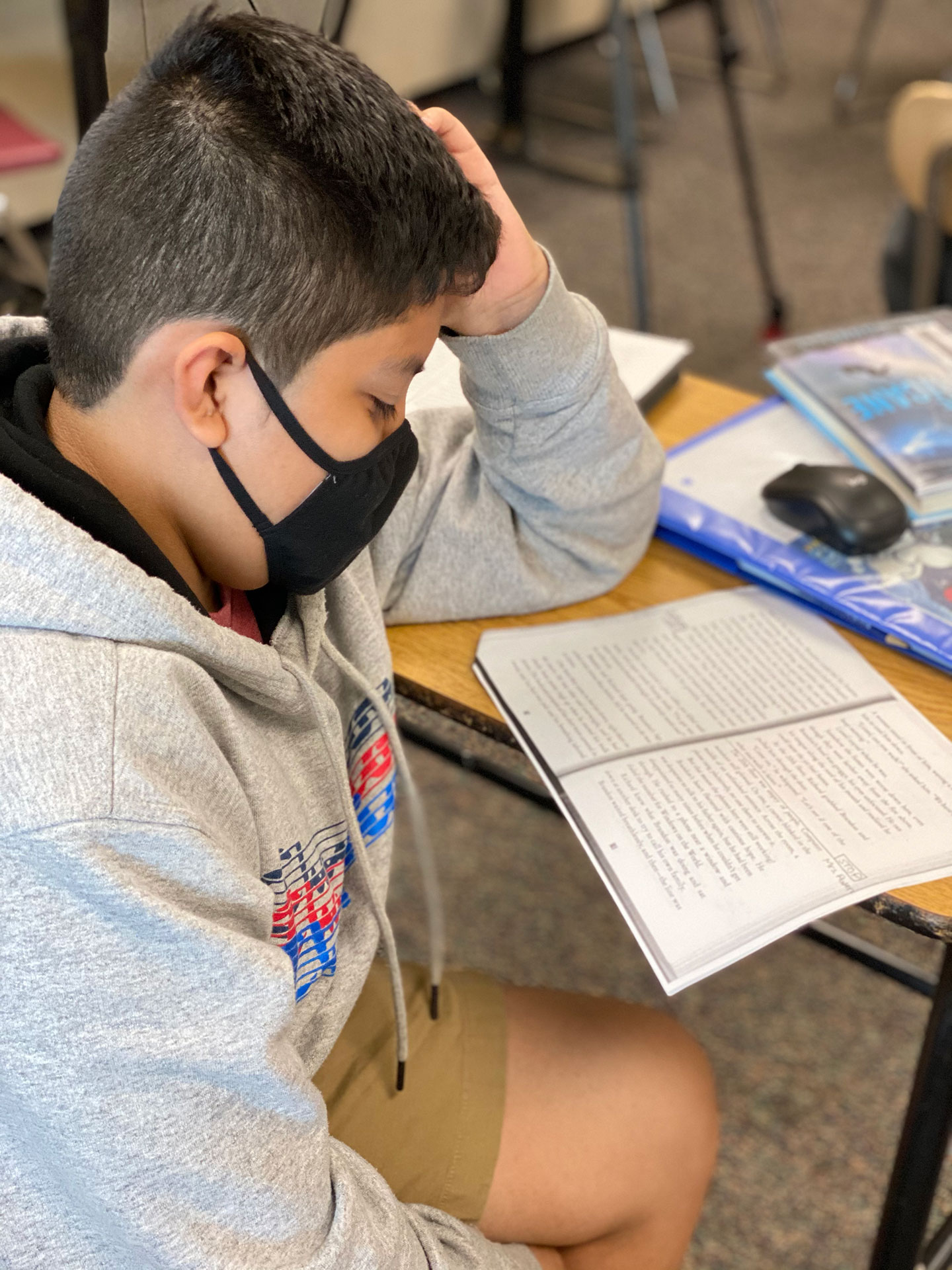

Growth happens when students are active learners.

CHANGE LIVES

Tailor your literacy instruction to the needs of your students.

Are you overwhelmed trying to keep up with the latest ideas?

Planning meaningful literacy instruction takes time. You want more than good ideas. You want to access the right idea when you need it. You can save the hours you would otherwise spend scouring the web for tricks and scripts.

Be prepared to meet the needs of every student as a reader and writer.

You are an expert professional. With access to an organized multimedia library of articles, videos, and courses, you’ll have the right resources to determine how to meet the unique needs of each student.

ENGAGED AND JOYFUL STUDENTS

You are mastering the art and science of literacy instruction by intentionally planning for your students.

PROFESSIONAL CONNECTIONS

Our contributors are in-the-field educators who enjoy connecting with you.

AMAZING GROWTH DATA

When you meet students at their points of need, they grow as readers and writers.

The Right Idea When You Need It

We understand the pressure to meet a variety of learning needs with too little time to do it. You want resources, but too many can be overwhelming. We organize our comprehensive resource library so it is easy to access practical ideas to tailor literacy instruction for your students.

MULTIMEDIA LIBRARY

Each week, several new features are published and added to the multimedia library of more than 5,000 articles, videos, and courses about essential literacy practices.

DIVERSE SCHOOLS

Our content features a variety of student populations and teaching situations from around the globe.

IN-THE-FIELD CONTRIBUTORS

More than 150 teachers and literacy leaders contribute to the library. Most carry full-time contracts with schools.

SINCE 2006

We have been publishing the best literacy practices since 2006 and continue to add to the library 48 weeks of the year.

MEET SOME CONTRIBUTORS

Stephanie Affinito

Author of Leading Literate Lives (2021) and Literacy Coaching (2018)

Stephanie is an educator in the Department of Literacy Teaching and Learning at the University at Albany.

Franki Sibberson

Author of Digital Reading (2018), Still Learning to Read (2nd edition, 2016), and many more

Franki is a thought leader in literacy and a past president of the National Council of Teachers of English (NCTE).

Tammy Mulligan

Co-author of It's All About the Books (2021) and Assessment in Practice (2018)

Tammy is an elementary teacher in Massachusetts.

Melanie Meehan

Author of The Responsive Writing Teacher (2021) and Every Child Can Write (2019)

Melanie is an elementary writing and social studies coordinator in Connecticut.

Ruth Ayres

Author of Enticing Hard-to-Reach Writers (2018) and more

Ruth is the editor in chief of the Choice Literacy site and the director of professional learning for a consortium of schools in northern Indiana.

Matt Renwick

Author of Reading by Example (Substack) and Leading Like a Coach (2022)

Matt is an elementary principal in Wisconsin.

Jen Schwanke

Author of The Principal ReBoot: 8 Ways to Revitalize Your School Leadership (2020) and You're the Principal! Now What? (2018)

Jen is an administrator for Dublin City Schools in Ohio.

What Our Members Say

Membership Options

Empower Students with the Best K-12 Literacy Practices

Classic Classroom (Month)

- Access the Classic Library curated for teachers

Classic Classroom (Year)

- Access the Classic Library curated for teachers

- Access Classic Classroom courses

Literacy Leader

- Access the Classic Library

- Access the Leaders Lounge, which is filled with resources for literacy leaders

- Access all courses

Group

- Discounted access to the Classic Library + Leaders Lounge

- For teams of three or more

Let's make it simple.

DECIDE

Decide to tailor literacy instruction to the needs of your students.

BE

Be part of a community with access to the best K-12 literacy practices.

EMPOWER

Empower students as readers and writers.

Educators want to stay fresh with literacy instruction, but they are so busy with students that they don't always have the time.

Choice Literacy publishes and delivers the best K-12 literacy practices so that educators can empower their students as readers and writers.

At Choice Literacy, we know that you want to be an educator who makes students’ lives better through literacy. To do that, you need access to comprehensive literacy resources delivered in a way that you will actually use. The problem is that it’s hard to find time to prepare meaningful literacy instruction that meets the diverse needs of your students, and that leaves you feeling overwhelmed. We believe it’s plain wrong to turn to tricks and scripts for literacy instruction.

With over 150 in-the-field contributors, we understand the pressure to meet a variety of needs without enough time to do it. This is why we hold true to workshop tenets like choice and ownership and share practical ways to plan and deliver literacy instruction straight to the point of student need.

Here’s how to do it:

- Decide to make every planning moment matter.

- Be part of a community with access to the best K-12 literacy practices.

- Engage students as readers and writers.

So, become a Choice Literacy member. In the meantime, join the Big Fresh newsletter to stay current and have exclusive ideas delivered to your inbox each week. You can stop grasping for unsatisfying activities and instead be confident that you are growing students as readers and writers with choice in literacy.

The Big Fresh

Each week, we release exclusive content to our Big Fresh subscribers. Get access to free articles focused on trends in K-12 literacy instruction and leadership.

Free Content

View some of our free articles and videos to sample Choice Literacy content.

Choice Literacy Membership

Articles

Get full access to all Choice Literacy article content

Videos

Get full access to all Choice Literacy video content

Courses

Access Choice Literacy course curriculum and training